-

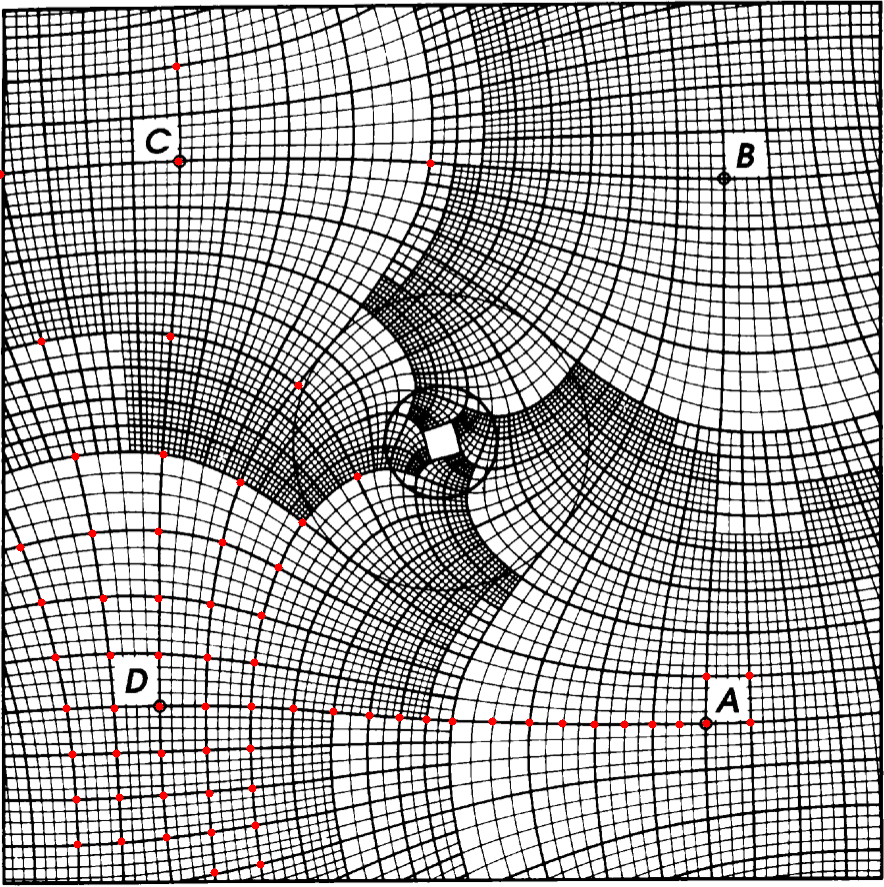

Working on a new project, which some might recognize. Claude is writing Python to push pixels around, and I’m doing the very tedious annotation you see in progress here:

-

So far “Claude Code gets confused and burns ‘usage’ going in circles when I ask it to do a refactor” is a pretty good proxy for when I haven’t actually thought clearly enough about the change I want to make. I do feel like I have sit on it though. Wish it was better about asking for help.

-

The productivity gain for $20 spent on Claude Code is hard to argue with, if it was just about money vs time. But I don’t really want Anthropic to ruin the environment and throw the global RAM supply chain into chaos to save me a few minutes. Wish there was more visibility into the actual impact

-

It’s a lot more fun to write Rust when AI is dealing with the semicolons, borrow-checker nagging, and all the other paper cuts (new paradigm: “Paper-cut Oriented Programming”?) I mean, I can write Rust. I have written Rust. But do I like writing Rust? Reading it I can just about deal with

-

Some thought after spending several hours with Claude Code on a new project…

-

I oppose the bombing of Iran today. I don’t believe it represents the will of the people, I dread the suffering and chaos it will unleash, and I’m somehow still, even now, dumbstruck at how brazen, cruel, and short-sighted my country’s leaders are capable of being. This is not who we are meant to be

-

Current

I’m intrigued by Current (via Daring Fireball), and I really enjoyed the essay that seems to have preceded it but…

This product claims to respect my time and attention, yet the page is so scroll-jacked and CSS-transitioned-up that I had to give it a couple of tries before I could bring myself to read to the bottom. Posts coming and going in a dynamic way might make sense in a reader app, but it does not for paragraphs on a web page, sir.

It’s paid up-front!? With versions for three platforms? How are they going to make enough to sustain this app? Is it even worth investing the time to try when it will inevitably go through a painful, soul-destroying transition to a subscription model sometime 2–3 years from now?

So I guess I’m giving it a pass for now but hopefully I’ve been hasty in my judgement and the next time it comes cross my RSS reader it will land better. Either way, it’s good to see RSS getting some love.

-

Study list for Spelling Bee: words of more than 4 letters, only 3 distinct

If you play Spelling Bee for a while, you’ll notice there are some words that keep coming up over and over. For example, there are lots of relatively long words that contain only three different letters, so they appear all the time. The other day I thought, “how many of these words can there be”, so I wrote a script: - download all the accepted words for the last two years (about 8300 words) - identify just the words that have more than 4 letters (so, higher scoring) and no more than 3 distinct letters

It turns out there are only about 140 such words, so as a public service I include them here:

abk: babka abm: mamba abn: banana abo: baobab abu: bubba aci: acacia acl: calla acn: cancan, canna aco: cacao, cocoa ade: added adm: madam ado: doodad adr: radar ady: daddy ael: allele afl: alfalfa agl: algal agm: gamma, magma ahl: halal aht: hatha akp: kappa alm: llama, mammal aln: annal alp: appall, palapa, papal aly: allay am: mamma amn: manna amo: momma amp: pampa ant: natant anw: wanna any: nanny aop: poppa aot: tattoo apw: papaw, pawpaw apy: papaya, pappy, yappy art: attar, ratatat, tartar ary: array aty: tatty bde: bedded, ebbed bel: belle bfo: boffo bho: boohoo bhu: hubbub bno: bonbon, bonobo bo: booboo bos: booboos, boobs bow: bowwow boy: booby cde: ceded cem: emcee chi: chichi cho: hooch cim: mimic cio: cocci cir: cirri civ: civic cno: cocoon cor: rococo de: deeded deg: edged, egged deh: heeded dei: eddied dek: deked dem: deemed, memed den: ended, needed dep: peeped, pepped deu: duded dew: wedded, weeded dgo: doggo dho: hoodoo div: vivid dmu: dumdum dov: voodoo efm: femme eft: effete egl: gelee eht: teeth, teethe ein: innie elm: melee elv: levee, level emz: mezze enp: penne ent: entente, tenet ept: teepee, tepee epv: peeve epw: peewee, pewee epy: peppy equ: queue etu: tutee etw: tweet flu: fluff gin: gigging, ginning, inning glo: googol hor: horror hot: tooth how: woohoo hoy: yoohoo ijn: jinni imn: minim ino: onion inp: pippin iny: ninny ipt: pipit itu: tutti koy: kooky lop: lollop lot: lotto mop: pompom mot: motto moy: mommy mru: murmur mu: muumuu muy: mummy, yummy opw: powwow opy: poppy ort: rotor puy: puppyHappy Solving!

-

Speculating about Apple Silicon

I know nothing beyond what’s been reported, but here’s my guess about where Apple’s high-end chips are going:

There’s no M4 Ultra because the next Mac Pro will get an M4 or M5 that supports multiple dies/packages and discrete RAM. To really pile on the CPU/GPU cores, they’ll be able to add more chips. Maybe several Maxs, or a variant with some new interconnect.

Presumably with some RAM packaged with each chip and a lot of magic to deal with the different memory latencies across the different processor and memory chips.

That’s how you build a truly scalable high-performance workstation for AI training as well as video/audio production.

-

Weather troll: unbelievably clear blue skies in upstate New York today, the day before the eclipse. Forecast for tomorrow doesn’t look so promising, but 🤞

-

PSA: Google Calendar on iPhone needs “Precise Location”

At some point the Google Calendar app on my iPhone stopped letting me search for locations when editing events. I could type in text, but would get no suggestions and would have to type/paste in an entire street address for it to do anything useful. Today I looked into it and after some experimenting, it looks like it was broken because I had turned off “precise location” for that app in Privacy settings. Because, why would my calendar need to know my precise location? In any case, turning it back on seems to have fixed it. And given that I’m putting the location on an event in order to eventually navigate there using Google Maps, I guess I’m not really exposing anything new. Still frustrating.

-

ML/AI is the long-awaited answer to the question “what are we going to do with all these transistors, since we can’t figure out how to write programs that do something useful with more than a handful of CPU cores?”

-

Source: gist.github.com/mosspresc…

-

⚽ Group G

-

⚽ Group H

-

⚽ Group E

-

⚽ Group F

-

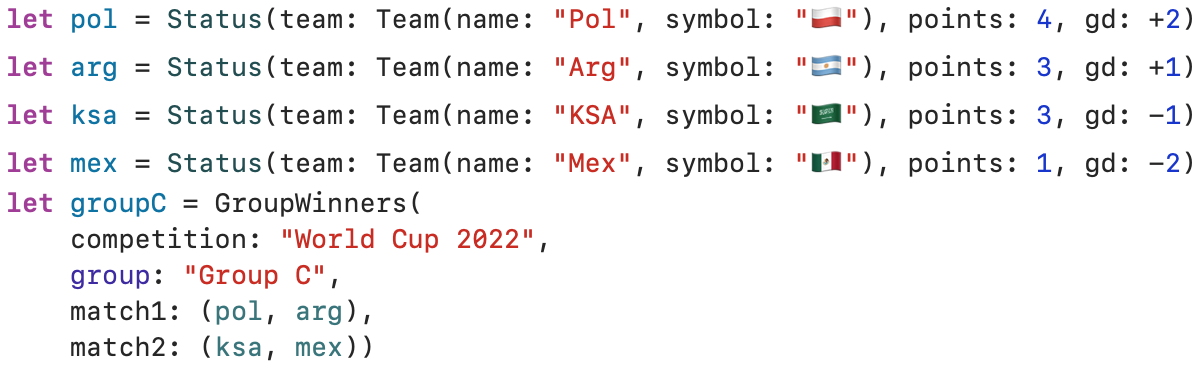

Group C

#worldcup ⚽

-

Watching the final games of the group stages, I can never keep track of the possible results in my head. So I made a quick and dirty viewer using SwiftUI:

-

Have we reached peak Zuckerberg? Where do we go from here? #holodeck #bondvillain

-

More Nand to Tetris: now self-contained

After a long break, I spent some time recently fleshing out my Python re-implementation of the remarkable From Nand to Tetris course. You can now compile and run Jack programs without downloading any other tools, and work through the entire course up to and including writing your own compiler for your CPU.

It’s still seriously good fun to build a (simulated) CPU from scratch in a couple of hours, and then see what it’s like to run an interactive program on it.

If you’re interested in learning about how an entire computer is built out of dumb electronic components, or you know how this stuff works and you want to play with your own designs, and if you like Python, check it out at github/mossprescott/pynand.

-

After decades(!) of watching from the sidelines, this year I’m putting some real time and energy into #WWDC2021. Feels good to be focusing on making great Mac software, which was my first love after all.

-

It’s on. #coffee

-

Font too big; DR

From the “Shouting into the wind” department: oversized body text is a menace on programming blogs I read. My theory is that these bloggers are running Linux at odd pixel densities (i.e. 1440p laptops) and they can’t read text at normal sizes, so they size everything up as a matter of course. Today I looked at the CSS on one and found “font-size: 1.2rem” which (I’ve now learned) means “make the body text 20% bigger than the reader wants it to be.” Please don’t do this. I might want to read what you have to say, but I often won’t have the patience to adjust my browser to accommodate your questionable life choices.

subscribe via RSS